When subagents take the wheel

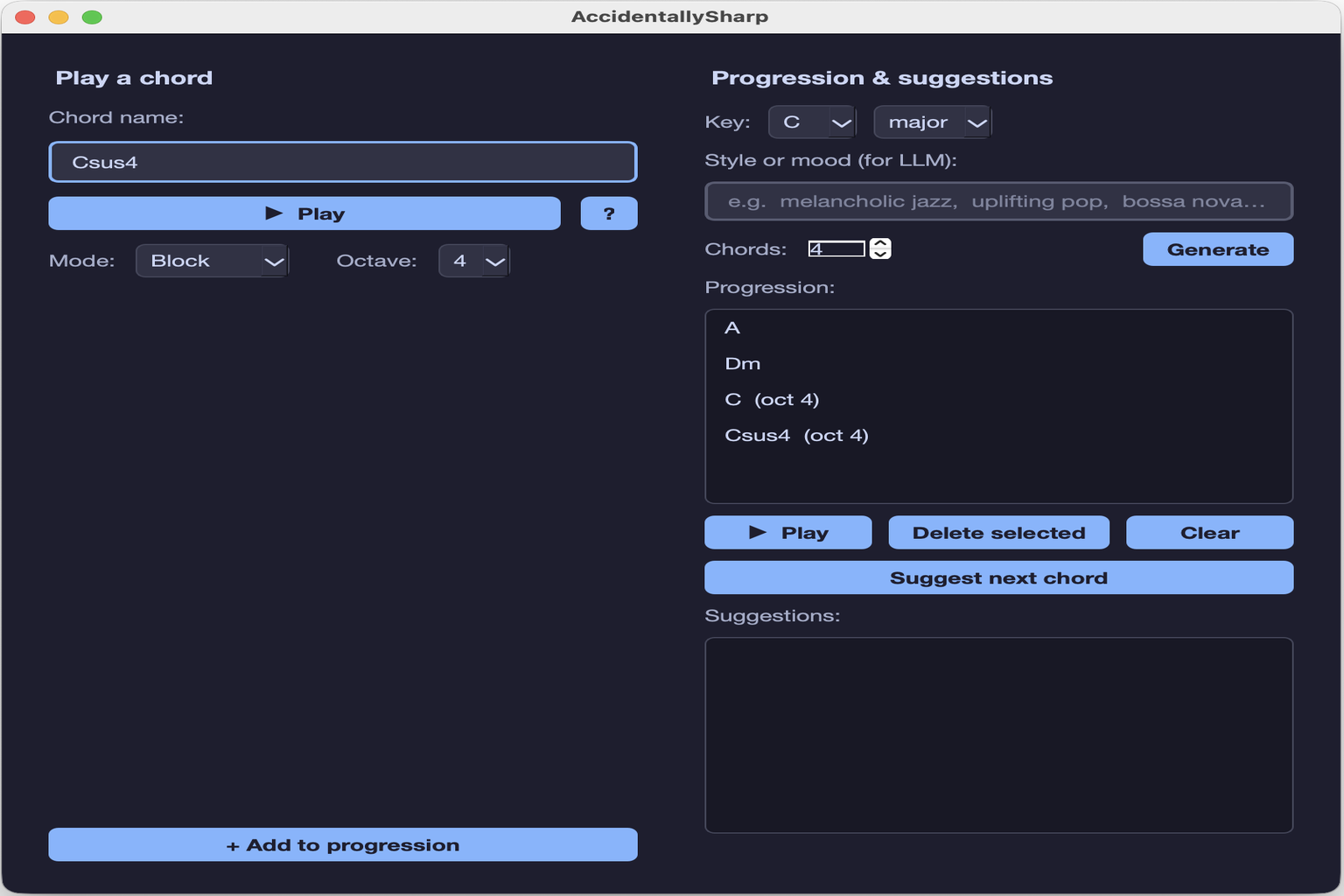

AccidentallySharp is a desktop application that lets music beginners explore chord progressions with the help of an AI assistant. But this article is not really about music. It is about how the application was built — specifically, about putting Claude subagents to work as a development team, each one owning a precise slice of the problem domain.

The idea is straightforward: instead of asking a single general-purpose AI to write an entire codebase, you define a set of specialized agents, give each one a focused role and a bounded scope, and let them collaborate. AccidentallySharp became the test bench for this approach. Five agents were configured inside the project repository under .claude/agents/, covering music theory, harmonic progressions, audio and MIDI, code review, and test generation. Each agent knew exactly what it was responsible for and, equally important, what it was not.

What follows is an account of how that team was assembled, how the responsibilities were divided, and what the resulting code looks like — from the strict four-layer architecture that enforces the boundaries, to the multi-provider LLM integration that the app uses at runtime to suggest the next chord in a progression.

The domain agents: music knowledge encoded as context

Two of the five agents carry music domain knowledge. Their job is not to write general Python — it is to make sure that every piece of code touching music theory or harmonic logic is correct according to the rules of the discipline.

The chord-theory-expert owns everything related to chord structure: nomenclature, parsing rules, interval formulas, enharmonic handling, and the many edge cases that make chord notation surprisingly tricky. It knows, for instance, that C7 is a dominant seventh (a major triad plus a minor seventh), not a major seventh chord, and that Cdim7 uses a doubly-flatted seventh, which is what distinguishes it from a half-diminished chord, whose seventh is flatted only once. These are not implementation details; they are domain invariants. Having a dedicated agent for them means the parser in theory/parser.py reflects actual music theory rather than a programmer’s approximation of it. The result is a regex-based tokenizer that matches quality tokens longest-first to avoid prefix collisions, infers implied extensions from jazz convention, and deduplicates redundant degrees at the end of each parse.
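The longest-first idea can be illustrated with a minimal sketch. It is not the code from theory/parser.py: the token list, the regular expression, and the split_chord helper are all assumptions made for the example, and the real parser handles far more notation.

```python
import re

# Hypothetical quality tokens for illustration; the real table may differ.
QUALITY_TOKENS = ["M", "maj", "maj7", "m", "m7", "m7b5", "dim", "dim7", "aug", "7"]

# Regex alternation takes the first alternative that matches, so longer
# tokens must come first: "maj7" before "maj", "m7b5" before "m7" or "m".
_QUALITIES = "|".join(
    re.escape(tok) for tok in sorted(QUALITY_TOKENS, key=len, reverse=True)
)
CHORD_RE = re.compile(
    rf"(?P<root>[A-G][#b]?)(?P<quality>{_QUALITIES})?(?P<rest>.*)"
)


def split_chord(symbol: str) -> tuple[str, str, str]:
    """Split a chord symbol into (root, quality token, remaining text)."""
    match = CHORD_RE.fullmatch(symbol)
    if match is None:
        raise ValueError(f"not a chord symbol: {symbol!r}")
    return match["root"], match["quality"] or "", match["rest"]


print(split_chord("Cmaj7"))   # ('C', 'maj7', '')
print(split_chord("Bbm7b5"))  # ('Bb', 'm7b5', '')
print(split_chord("F#dim7"))  # ('F#', 'dim7', '')
```

Without the sort, a shorter token listed earlier would win: Cmaj7 would be read as maj followed by a stray 7.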

The harmonic-progressions-expert covers the layer above individual chords: how sequences of chords relate to a key, what makes a suggestion musically plausible for a beginner, and how to structure the context sent to the runtime LLM. It is responsible for the validation logic in llm/validator.py — a three-tier acceptance filter that classifies each LLM suggestion as diatonic, borrowed, or rejected — and for the harmonic context object in llm/context_builder.py that packages key, progression history, current chord, and style hint into the JSON payload the model receives. The boundary between these two agents is deliberate: chord-theory-expert thinks in individual chords; harmonic-progressions-expert thinks in progressions.
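A three-tier filter of this kind might look roughly like the sketch below. It is an illustration under stated assumptions, not the contents of llm/validator.py: chords are reduced to (root name, triad quality) pairs, the borrowed table lists only the two examples named in this article, and the classify function and Verdict enum are hypothetical names.

```python
from enum import Enum

NOTE_TO_PC = {
    "C": 0, "C#": 1, "Db": 1, "D": 2, "D#": 3, "Eb": 3, "E": 4, "F": 5,
    "F#": 6, "Gb": 6, "G": 7, "G#": 8, "Ab": 8, "A": 9, "A#": 10, "Bb": 10, "B": 11,
}

# (semitones above the tonic, triad quality) for each diatonic degree.
DIATONIC = {
    "major": {(0, "maj"), (2, "min"), (4, "min"), (5, "maj"),
              (7, "maj"), (9, "min"), (11, "dim")},
    "minor": {(0, "min"), (2, "dim"), (3, "maj"), (5, "min"),
              (7, "min"), (8, "maj"), (10, "maj")},
}
# Assumption: only the two borrowed chords mentioned in the article.
BORROWED = {
    "major": {(10, "maj")},  # flat-VII in a major key
    "minor": {(5, "maj")},   # major IV in a minor key
}


class Verdict(Enum):
    DIATONIC = "diatonic"
    BORROWED = "borrowed"
    REJECTED = "rejected"


def classify(tonic, mode, suggestion, current=None):
    """Classify a suggested (root, quality) chord relative to a key."""
    root, quality = suggestion
    if current is not None:
        cur_root, cur_quality = current
        # Repeating the current chord is not a progression.
        if NOTE_TO_PC[cur_root] == NOTE_TO_PC[root] and cur_quality == quality:
            return Verdict.REJECTED
    degree = ((NOTE_TO_PC[root] - NOTE_TO_PC[tonic]) % 12, quality)
    if degree in DIATONIC[mode]:
        return Verdict.DIATONIC
    if degree in BORROWED[mode]:
        return Verdict.BORROWED
    return Verdict.REJECTED


print(classify("C", "major", ("G", "maj")))   # Verdict.DIATONIC
print(classify("C", "major", ("Bb", "maj")))  # Verdict.BORROWED
print(classify("C", "major", ("F#", "maj")))  # Verdict.REJECTED
```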

The technical agents: engineering discipline by design

The remaining three agents handle the engineering side of the project. They do not know anything about music — they know about code quality, audio plumbing, and test coverage.

The chord-to-audio-expert is responsible for everything between a Chord object and an audible sound: MIDI note mapping, FluidSynth API usage, voicing strategy, timing constants, and OS audio driver selection. It produced the close-voicing algorithm in audio/midi.py, which stacks notes upward from the root and bumps each note up by an octave whenever it would land at or below the previous one, and the thread-safe playback engine in audio/player.py, which runs block and arpeggio modes without blocking the UI. It also knows the platform differences: CoreAudio on macOS, ALSA on Linux, DirectSound on Windows.
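To make the threading pattern concrete, here is a hedged sketch of a lock-protected arpeggio player. It is not the code from audio/player.py: the Player class, its timing parameters, and the FakeSynth stand-in are assumptions, and the stand-in simply records calls so the example runs without FluidSynth or audio hardware.

```python
import threading
import time


class FakeSynth:
    """Stand-in for a synth binding; records events instead of making sound."""

    def __init__(self):
        self.events = []

    def noteon(self, chan, key, vel):
        self.events.append(("on", key))

    def noteoff(self, chan, key):
        self.events.append(("off", key))


class Player:
    def __init__(self, synth, step=0.15, hold=0.6):
        self._synth = synth
        self._lock = threading.Lock()  # serialises every call into the synth
        self._step = step              # delay between successive note onsets
        self._hold = hold              # how long each note sounds

    def _play_note(self, key, delay):
        time.sleep(delay)              # each thread waits for its own onset
        with self._lock:
            self._synth.noteon(0, key, 90)
        time.sleep(self._hold)         # sleep independently, then release
        with self._lock:
            self._synth.noteoff(0, key)

    def play_arpeggio(self, notes):
        """Start one daemon thread per note and return immediately."""
        threads = [
            threading.Thread(
                target=self._play_note, args=(key, i * self._step), daemon=True
            )
            for i, key in enumerate(notes)
        ]
        for thread in threads:
            thread.start()
        return threads  # returned only so callers and tests can join


synth = FakeSynth()
for thread in Player(synth, step=0.01, hold=0.02).play_arpeggio([60, 64, 67]):
    thread.join()
print(len(synth.events))  # 6
```

The caller never sleeps: play_arpeggio returns as soon as the threads are started, which is what keeps a UI thread responsive in this pattern.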

The code-reviewer enforces the architectural contract. The project runs on a strict four-layer rule: theory imports nothing, llm and audio import only from theory, ui is the only layer allowed to import from all others. No FluidSynth calls outside audio/, no LLM API calls outside llm/, no PyQt6 imports outside ui/. The code-reviewer’s role is to catch any violation of these rules before it becomes load-bearing. It also checks Python quality, correct usage of the FluidSynth and LLM APIs, and domain correctness at the boundary between layers.
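In the project this contract is enforced by the code-reviewer agent. As a complementary illustration, the same import rule could also be checked mechanically. The sketch below is an assumption, not part of the repository: it presumes the four layers are top-level packages named theory, llm, audio and ui, and the layer_violations helper is a hypothetical name.

```python
import ast

# Layer -> project layers it is allowed to import from.
ALLOWED = {
    "theory": set(),
    "llm": {"theory"},
    "audio": {"theory"},
    "ui": {"theory", "llm", "audio"},
}


def layer_violations(layer: str, source: str) -> list[str]:
    """Return the project layers that `source` imports but `layer` may not."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            names = [node.module]
        else:
            continue  # not an absolute import statement
        for name in names:
            top = name.split(".")[0]
            # Only project layers are policed here; third-party and
            # standard-library imports are ignored by this sketch.
            if top in ALLOWED and top != layer and top not in ALLOWED[layer]:
                violations.append(top)
    return violations


print(layer_violations("theory", "from llm.validator import check"))  # ['llm']
print(layer_violations("audio", "from theory.chord import Chord"))    # []
```

A check like this covers only the layer-to-layer rule; the bans on FluidSynth, LLM API and PyQt6 imports outside their home layers would need a separate table of third-party module names.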

The test-generator produces pytest cases for the theory layer, the MIDI mapping, and the LLM validation logic. Crucially, it operates under a hard constraint: no test may require audio hardware or live API credentials. This keeps the test suite runnable in CI and on any developer machine without setup. The result is a set of parametrized tests that verify chord parsing edge cases, pitch class arithmetic, close-voicing correctness, and the three-tier validation filter — all deterministic, all offline.

The LLM at runtime: a harmonic advisor, not a code generator

Once the application is running, the LLM plays a second, completely different role: it becomes a musical advisor. The user builds a chord progression by clicking through a virtual piano interface, and at any point can ask the app to suggest what chord might come next, or hand over entirely and have a full progression generated from a mood description. Both features are served by the llm/ layer.

The key design decision is what to send to the model. Rather than passing raw chord names, llm/context_builder.py assembles a structured JSON object containing the current key and mode, the last three or four chords in the progression, the chord the user is currently on, and a style hint such as ‘pop’ or ‘jazz’. This gives the model enough harmonic context to reason about tonal function without overwhelming the prompt with irrelevant detail. The response comes back as a JSON list of suggestions, each with a chord name, a scale degree, and a plain-language reason.

Before any suggestion reaches the UI it passes through the three-tier filter in llm/validator.py: diatonic chords are shown unconditionally; known borrowed chords, such as the flat-VII in a major key or the major IV in a minor key, are shown with a label; and anything else is silently rejected and retried. A suggestion identical to the current chord is also rejected, since repeating the same chord is not a progression.

The provider abstraction in llm/providers.py means the user can point the app at any of four backends at startup: OpenAI, Anthropic, a local Ollama instance, or LM Studio. Ollama and LM Studio share an OpenAI-compatible base class because they expose the same chat completions endpoint; Anthropic uses its own SDK. The rest of the application never knows which provider is active: it calls get_chord_suggestions() and receives a validated list regardless of what is on the other end.
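The runtime flow described above (build a context, hand it to whichever provider is active, parse the JSON reply) can be sketched as follows. Everything here is illustrative: the JSON field names, the Provider protocol, the signature given to get_chord_suggestions, and the CannedProvider test double are assumptions, and the real validation step is far richer than the identical-chord check shown.

```python
import json
from typing import Protocol


class Provider(Protocol):
    """Anything that turns a prompt string into a reply string."""

    def complete(self, prompt: str) -> str: ...


def build_context(key, mode, progression, current, style, history=4):
    """Package the harmonic context; field names are hypothetical."""
    return {
        "key": key,
        "mode": mode,
        "recent_chords": progression[-history:],  # only the last few chords
        "current_chord": current,
        "style": style,
    }


def get_chord_suggestions(provider: Provider, context: dict) -> list[dict]:
    """Ask the provider for suggestions and keep only well-formed ones."""
    reply = provider.complete(json.dumps(context))
    required = {"chord", "degree", "reason"}
    return [
        item
        for item in json.loads(reply)
        if required <= item.keys() and item["chord"] != context["current_chord"]
    ]


class CannedProvider:
    """Offline stand-in that returns a fixed reply instead of calling an API."""

    def complete(self, prompt: str) -> str:
        return json.dumps([
            {"chord": "C", "degree": "I", "reason": "Resolves home."},
            {"chord": "G", "degree": "V", "reason": "Stays put."},
        ])


ctx = build_context("C", "major", ["C", "Am", "F", "Dm", "G"], "G", "pop")
print(ctx["recent_chords"])  # ['Am', 'F', 'Dm', 'G']
print([s["chord"] for s in get_chord_suggestions(CannedProvider(), ctx)])  # ['C']
```

Because the caller depends only on the complete method, swapping a hosted backend for a local one would not touch the calling code, which is the point of the abstraction.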

Under the hood: a four-layer architecture with strict boundaries

The codebase is organised into four layers with import rules that are enforced as hard constraints, not guidelines. theory/ is pure Python with no external dependencies and no I/O: it only knows about chords, intervals, and enharmonic spelling. llm/ and audio/ each import from theory/ and nowhere else. ui/ is the only layer allowed to import from all others. This structure makes each subsystem independently testable and prevents the kind of coupling that accumulates silently when there is no enforced boundary.

The central data type is the Chord dataclass defined in theory/chord.py. It separates pitchClass, an integer from 0 to 11 used for all arithmetic, from spelling, the string that preserves the user’s enharmonic choice for display. A C# and a Db are the same pitch class but different spellings, and the model carries both without conflating them. Extensions are represented as degree plus alteration pairs, which means the same code handles natural, flatted, and sharpened extensions without special cases.

The parser in theory/parser.py works in three left-to-right passes: extract the slash bass note if present, match the root, then consume quality and extension tokens. Quality tokens are tried longest-first to prevent prefix collisions: maj7 must be matched before maj, and maj before M. Several jazz conventions are hard-coded: C7 produces a dominant seventh, C9 implies a minor seventh underneath, and Cadd9 explicitly excludes the seventh. These rules were provided by the chord-theory-expert agent and would be easy to get wrong without dedicated domain knowledge.

Pitch class sets are computed in theory/intervals.py using modular arithmetic over static lookup tables. Each quality maps to a list of semitone offsets from the root; extensions add further offsets with alteration applied before the modulo. The result is a set of integers, which naturally deduplicates pitch classes that different extensions happen to share.

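The data model and the pitch class arithmetic can be sketched together. This is a simplified illustration, not the code in theory/chord.py or theory/intervals.py: the field names (written here in snake_case), the quality labels, and the contents of both lookup tables are assumptions.

```python
from dataclasses import dataclass

# Semitone offsets from the root for a few qualities (illustrative subset).
QUALITY_OFFSETS = {
    "maj": [0, 4, 7],
    "min": [0, 3, 7],
    "aug": [0, 4, 8],
    "dom7": [0, 4, 7, 10],
}
# Natural 9th, 11th and 13th, in semitones above the root.
DEGREE_OFFSETS = {9: 14, 11: 17, 13: 21}


@dataclass(frozen=True)
class Extension:
    degree: int
    alteration: int = 0  # -1 flat, 0 natural, +1 sharp


@dataclass(frozen=True)
class Chord:
    pitch_class: int  # 0..11, used for all arithmetic
    spelling: str     # display only: keeps "C#" distinct from "Db"
    quality: str
    extensions: tuple[Extension, ...] = ()


def pitch_classes(chord: Chord) -> set[int]:
    """Return the chord's pitch classes; the set removes duplicates."""
    pcs = {(chord.pitch_class + off) % 12 for off in QUALITY_OFFSETS[chord.quality]}
    for ext in chord.extensions:
        # The alteration is applied before the modulo.
        pcs.add((chord.pitch_class + DEGREE_OFFSETS[ext.degree] + ext.alteration) % 12)
    return pcs


c_sharp = Chord(1, "C#", "maj")
d_flat = Chord(1, "Db", "maj")
print(pitch_classes(c_sharp) == pitch_classes(d_flat))  # True

# A flat 13th on an augmented triad lands on the existing raised fifth.
print(sorted(pitch_classes(Chord(0, "C", "aug", (Extension(13, -1),)))))  # [0, 4, 8]
```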
Audio playback in audio/player.py uses a threading.Lock to protect all FluidSynth calls and a background daemon thread per note in arpeggio mode, each sleeping independently before releasing its note. The UI thread never blocks. The close-voicing algorithm in audio/midi.py builds a chord from the bottom up, bumping each candidate note up by an octave whenever it would fall at or below the previous note — a simple loop that produces musically idiomatic voicings without any look-ahead.
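The close-voicing loop is short enough to sketch in full. The version below is an approximation of the idea rather than the code in audio/midi.py: the close_voicing name, the root-first input order, and the base_c parameter (the MIDI number of the C that starts the chosen octave) are assumptions.

```python
def close_voicing(pitch_classes: list[int], base_c: int = 60) -> list[int]:
    """Stack root-first pitch classes upward as MIDI notes.

    base_c is the MIDI number of the C that starts the octave (60 = middle C).
    """
    voiced: list[int] = []
    for pc in pitch_classes:
        note = base_c + pc % 12
        # Bump by octaves until the note sits strictly above the previous one.
        while voiced and note <= voiced[-1]:
            note += 12
        voiced.append(note)
    return voiced


print(close_voicing([0, 4, 7]))      # C major -> [60, 64, 67]
print(close_voicing([9, 0, 4]))      # A minor -> [69, 72, 76]
print(close_voicing([7, 11, 2, 5]))  # G7      -> [67, 71, 74, 77]
```

The comparison uses "at or below" rather than "below", so two chord tones that share a pitch class never collapse onto the same MIDI note.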

Getting started: installation and launch

AccidentallySharp requires Python 3.11 or higher and FluidSynth, a system-level audio synthesis library that must be installed separately before the Python dependencies. On macOS it is a single Homebrew command; on Ubuntu or Debian a single apt command; on Windows it requires a manual download from fluidsynth.org and adding the binary to the system PATH. Once FluidSynth is present, the rest of the setup is standard:

# macOS

brew install fluid-synth

# Ubuntu / Debian

sudo apt install fluidsynth

# Clone and install Python dependencies

git clone https://github.com/ettoremessina/AccidentallySharp.git

cd AccidentallySharp

python -m venv venv

source venv/bin/activate

pip install -r requirements.txt

The application is launched with python main.py. The --llm flag selects the provider; if omitted it defaults to OpenAI and expects an OPENAI_API_KEY environment variable. To use Anthropic’s Claude, pass --llm anthropic and set ANTHROPIC_API_KEY. For a fully local setup with no API keys or network traffic, Ollama and LM Studio are both supported via --llm ollama and --llm lmstudio respectively, with optional --llm-model, --ollama-url, and --lmstudio-url flags to override the defaults:

# OpenAI (default)

export OPENAI_API_KEY=sk-...

python main.py

# Anthropic Claude

export ANTHROPIC_API_KEY=sk-ant-...

python main.py --llm anthropic

# Local Ollama

python main.py --llm ollama --llm-model llama3

# LM Studio

python main.py --llm lmstudio --llm-model mistralai/ministral-3b --lmstudio-url http://localhost:1234/v1

Download the Complete Code

The complete code is available on GitHub at https://github.com/ettoremessina/AccidentallySharp. The program was written with Claude Code subagents, using Sonnet 4.6 as the model, inside the VS Code IDE.

These materials are distributed under the MIT license; feel free to use, share, fork and adapt them as you see fit.

Please also feel free to submit pull requests and bug reports to this GitHub repository, or to contact me through the social media channels listed on the contact page.

FAQ

What is AccidentallySharp?

AccidentallySharp is a desktop application that helps music beginners explore and build chord progressions. It provides a virtual piano interface, playback in block or arpeggio mode, and AI-powered suggestions for the next chord in a progression.

What role did Claude subagents play in building this application?

Five specialized Claude agents were configured in the project repository, each owning a specific domain: chord theory, harmonic progressions, audio and MIDI, code review, and test generation. Rather than using a single general-purpose assistant, each agent worked within a bounded scope, contributing focused expertise to its layer of the codebase.

What are the domain agents responsible for?

The chord-theory-expert handles chord nomenclature, parsing rules, interval formulas, and enharmonic edge cases. The harmonic-progressions-expert covers progression logic, diatonic validation, borrowed chord recognition, and the structure of the harmonic context sent to the runtime LLM.

What are the technical agents responsible for?

The chord-to-audio-expert handles MIDI mapping, FluidSynth integration, voicing algorithms, and OS audio driver selection. The code-reviewer enforces the four-layer architectural contract and checks for domain correctness at layer boundaries. The test-generator produces pytest cases that run entirely offline, without audio hardware or live API credentials.

How does the four-layer architecture work?

The codebase is divided into four layers with strict import rules: theory/ has no external dependencies; llm/ and audio/ import only from theory/; ui/ is the only layer allowed to import from all others. No FluidSynth calls are permitted outside audio/, no LLM API calls outside llm/, and no PyQt6 imports outside ui/.

How does the application use an LLM at runtime?

When the user asks for a chord suggestion, the app sends a structured JSON context to the LLM containing the current key, the last few chords, the current chord, and a style hint. The LLM returns a list of suggestions with reasons, which are then filtered through a three-tier validator before being shown in the UI.

Which LLM providers are supported at runtime?

AccidentallySharp supports OpenAI (default, gpt-4o-mini), Anthropic (claude-sonnet-4-6), Ollama, and LM Studio. The provider is selected via the --llm command-line flag at startup. Ollama and LM Studio share an OpenAI-compatible base class; Anthropic uses its own SDK.

Can the application be used without an internet connection?

Yes. When the --llm flag points at a local Ollama or LM Studio instance, the application runs entirely offline. The bundled GeneralUser GS soundfont also means no network access is needed for audio playback.

What is the close-voicing algorithm?

The close-voicing algorithm builds a chord from the root upward, placing each note as close as possible above the previous one. Whenever a candidate note would fall at or below the previous note, it is bumped up by an octave. The result is a compact, musically natural voicing without any manual arrangement.

How are the tests structured?

Tests cover the theory layer (chord parsing, pitch class arithmetic), the MIDI mapping (close voicing, slash chord bass placement), and the LLM validation logic (diatonic membership, borrowed chord labels, rejection rules). All tests are deterministic and require no audio hardware or API credentials to run.